Global Collaboration

Seeking worldwide partners

We invite collaborators worldwide to jointly develop fusion datasets for autonomous driving and intelligent chassis systems, along with algorithms for building and using those datasets.

- Real-vehicle data collection and closed-loop verification platform

- In-house server: 8× NVIDIA RTX 5090 GPUs

- Broad industry partnerships for validation and deployment

We are recruiting computer science interns. The stipend is 100–300 RMB per day, and we support recommendations to industry and academia.

Interested in co-development, data partnership, or internships? Contact us.

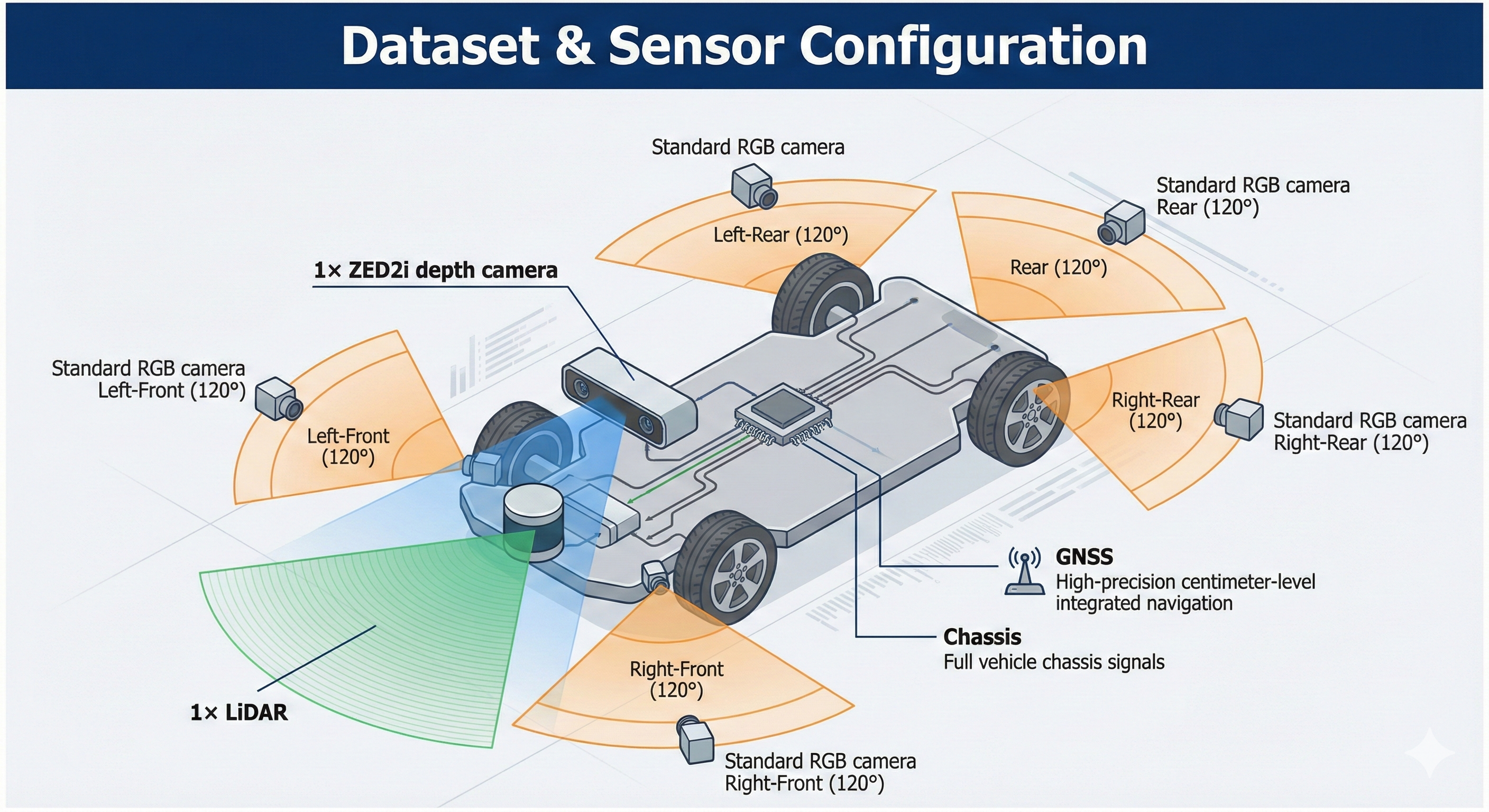

Sensor Configuration

- 5× Surround RGB cameras (front-left, front-right, rear-left, rear-right, rear-center)

- ZED 2i stereo camera: left and right RGB with per-frame dense depth maps (.npy)

- LiDAR: forward-facing point clouds (.npy), one per frame

- GNSS: high-precision GPS with north/east velocity, absolute heading, and centimeter-level positioning

- Chassis: speed, yaw rate, and raw IMU (X/Y acceleration), enabling direct side-slip angle derivation
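The side-slip angle mentioned in the list above can be sketched as the difference between the direction of travel (from the GNSS north/east velocity) and the absolute heading. This is a minimal illustration, not necessarily the team's method. The function names, the use of radians, the clockwise-from-north heading convention, and the `min_speed` cutoff are all assumptions made for this example.

```python
import math


def wrap_angle(angle):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (angle + math.pi) % (2.0 * math.pi) - math.pi


def side_slip_angle(v_north, v_east, heading, min_speed=0.5):
    """Estimate side-slip angle (radians) from GNSS velocity and heading.

    Assumptions (not confirmed by the dataset description):
    - v_north, v_east are in m/s
    - heading is in radians, measured clockwise from north
    - a positive result means the velocity vector points to the right
      of the vehicle's longitudinal axis
    """
    speed = math.hypot(v_north, v_east)
    if speed < min_speed:
        # The course angle is ill-defined near standstill, so report zero slip.
        return 0.0
    # Course over ground, clockwise from north, matching the heading convention.
    course = math.atan2(v_east, v_north)
    return wrap_angle(course - heading)
```

The angle wrap matters near north: a course of 5° with a heading of 350° should give a slip of 15°, not -345°. Below the assumed speed cutoff the function returns zero because the course angle is dominated by noise.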

Each episode contains fully synchronized multi-sensor streams (surround RGB, ZED stereo and depth, LiDAR, and vehicle dynamics), resampled to a uniform 4 Hz grid.
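To illustrate what resampling to a uniform 4 Hz grid can look like for a scalar signal such as speed or yaw rate, here is a minimal sketch using linear interpolation. The dataset's actual resampling procedure is not described, so the function name `resample_uniform`, the interpolation method, and the choice to start the grid at the first timestamp are assumptions.

```python
from bisect import bisect_right


def resample_uniform(times, values, rate_hz=4.0):
    """Linearly interpolate (times, values) onto a uniform grid at rate_hz.

    Assumes `times` is strictly increasing and given in seconds.
    Returns (grid, resampled_values) as two lists.
    """
    if len(times) != len(values) or len(times) < 2:
        raise ValueError("need at least two samples with matching lengths")
    step = 1.0 / rate_hz
    # Number of grid points that fit inside [times[0], times[-1]].
    n = int((times[-1] - times[0]) / step + 1e-9) + 1
    grid = [times[0] + k * step for k in range(n)]
    resampled = []
    for g in grid:
        # Pick the segment [times[i-1], times[i]] that contains g.
        i = min(max(bisect_right(times, g), 1), len(times) - 1)
        t0, t1 = times[i - 1], times[i]
        w = (g - t0) / (t1 - t0)
        resampled.append(values[i - 1] + w * (values[i] - values[i - 1]))
    return grid, resampled
```

Linear interpolation suits continuous scalar signals. It is not appropriate for wrapped angles such as heading, or for image and point-cloud frames, where picking the nearest sample in time is the more usual choice.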

Scene Demos

Synchronized multi-sensor recordings across all scene categories.

Team

Intelligent Chassis Team · School of Vehicle and Mobility, Tsinghua University

Shiyue Zhao · Yuhong Jiang · Xinhan Li · Chengkun He · Junzhi Zhang

Citation

@misc{extreme_driving_dataset_2026,
  title        = {Extreme Driving Dataset: Multi-Modal Episodes for Critical and Adverse-Condition Driving},
  author       = {Shiyue Zhao and Yuhong Jiang and Xinhan Li and Chengkun He and Junzhi Zhang},
  howpublished = {Intelligent Chassis Team, School of Vehicle and Mobility, Tsinghua University},
  year         = {2026}
}